AI content hallucinations vs human bias: An editor’s double challenge

Let me tell you a secret. Editing used to be much simpler.

Not easier. Simpler.

A human wrote something. A human had blind spots. You corrected the blind spots. Everyone went home with their ego slightly bruised but intact.

Now I open a draft and think, “Is this a hallucination, a statistical compromise, or just Dave being Dave?”

Welcome to the era of AI bias in content.

It’s not just about machines getting things wrong. It’s about editors navigating two different species of distortion at the same time.

And if you think that sounds dramatic, you’ve clearly never tried to untangle a confident paragraph that cites a study which doesn’t exist.

Make yourself comfortable. This is the double challenge nobody warned us about.

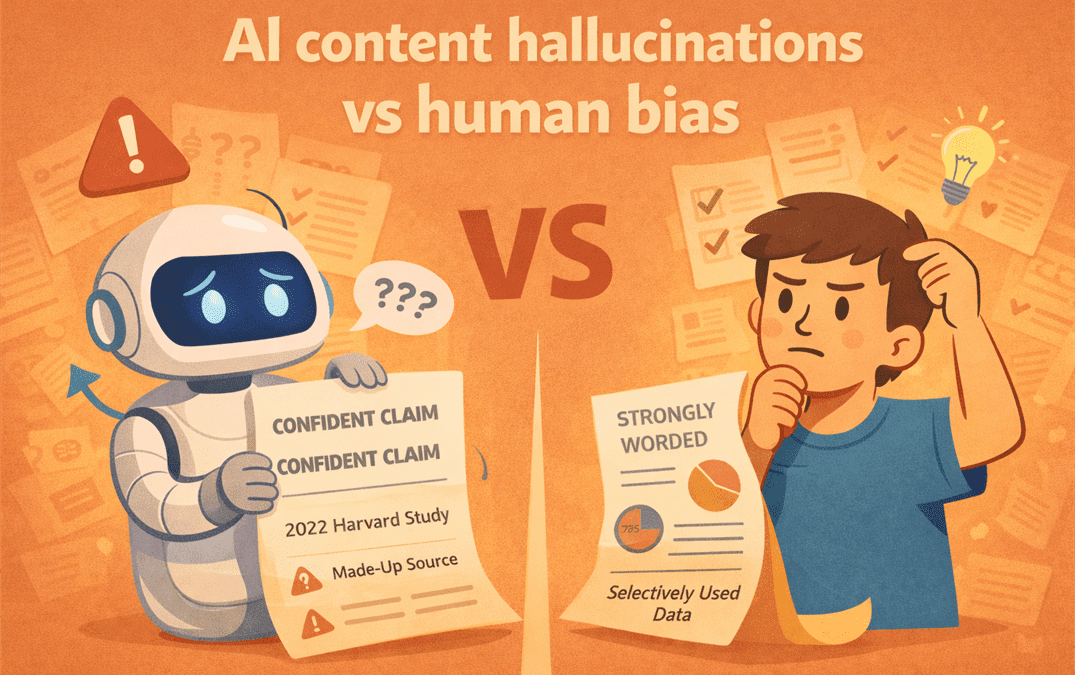

First opponent: AI hallucinations

Let’s define the obvious villain.

AI hallucinations happen when a model invents facts, sources, statistics, or context with total confidence.

It doesn’t hesitate. It doesn’t blush. It doesn’t add “I might be wrong.”

It just says, “According to a 2022 Harvard study…”

And you think, “Oh good, Harvard.”

Then you check. And Harvard has no idea what you’re talking about.

The tricky part is that hallucinations don’t always look absurd. They look plausible. They often follow the shape of truth.

Which makes AI fact-checking challenges far more subtle than simple error correction.

You’re not spotting typos. You’re verifying reality, and that’s a different skill set.

Second opponent: human bias

Now let’s not pretend humans are neutral saints.

Human bias shows up as:

- Confirmation bias

- Selective evidence

- Emotional framing

- Strategic omission

- Strong opinions dressed as facts

If you’ve ever edited a thought leadership piece that quietly ignores inconvenient data, you know what I mean.

Human bias has texture. It has motivation. It often has ego attached. Which is why the debate about human vs AI bias misses the point.

It’s not a choice between flawed and flawless.

It’s a choice between different types of distortion.

AI bias in content isn’t neutral either

AI bias in content doesn’t usually look like wild invention. It looks like statistical smoothing.

AI is trained on massive datasets. It reflects patterns.

That means:

- Dominant narratives get amplified

- Minority perspectives get diluted

- Controversial positions get softened

- Language gravitates toward consensus

The result is content that feels balanced but often lacks tension.

If human bias leans too hard in one direction, AI bias leans toward the average.

For an editor, that average can be just as misleading as extremism, because neutrality without context isn’t accuracy. It’s compression.

The editor’s double challenge

So now you’re editing drafts that might contain:

- Human overconfidence

- AI overconfidence

- Human ideology

- AI statistical bias

- Made-up citations

- Perfectly phrased but conceptually empty paragraphs

It’s not just proofreading anymore. It’s investigative journalism with a red pen.

AI editorial judgement becomes less about style and more about epistemology.

Yes, I just used that word. I promise I’m still fun at parties.

The real question becomes: how do you build a workflow that respects both risks without paralysing production?

Where AI fact-checking challenges get real

Let’s talk practical friction.

AI will:

- Cite studies without links

- Blend real frameworks with invented details

- Generalise complex research into tidy summaries

- Confidently state outdated information

You need:

- Source verification

- Date checks

- Cross-referencing

- Context validation

That takes time.

If you assumed AI would eliminate research hours, you may discover it shifts them into verification hours instead.

And verification is slower than drafting.

Where human bias hides in plain sight

Now flip the lens.

A human writer might:

- Frame a case study to support a pre-decided conclusion

- Overemphasise personal experience

- Downplay contradictory evidence

- Use emotionally loaded language to tilt perception

The danger here isn’t hallucination. It’s persuasion masquerading as neutrality.

As an editor, you’re not just checking facts. You’re checking framing.

You’re asking:

- What’s missing?

- What alternative interpretation exists?

- Is this balanced or just rhetorically confident?

Human bias feels familiar. That makes it harder to spot.

Why human vs AI bias is the wrong framing

The popular debate goes something like this:

“Humans are biased. AI is objective.”

Or:

“AI is dangerous. Humans are more trustworthy.”

Both positions are lazy and quite wrong.

Human vs AI bias isn’t a duel. It’s a layered system.

AI inherits bias from training data created by humans. Humans interpret AI outputs through their own lens.

Editors then filter both through brand strategy.

Bias doesn’t go away. It builds up over time. The real job is figuring out where it first sneaks into your process.

Building stronger AI editorial judgement

If you’re serious about quality, you need deliberate systems.

Here’s what I’ve learned, usually the hard way.

1. Separate generation from validation

Never let the same session that generated the content validate the facts.

Treat AI as a drafter, not a verifier.

Run claims through independent sources. Use primary research when possible. If a citation looks impressive, assume it needs checking.

Trust, then verify. Always.
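If it helps to make that separation concrete, here is a minimal Python sketch of a first pass that pulls citation-shaped sentences out of a draft so a human can check them against independent sources. The pattern list and the function names (`CLAIM_PATTERNS`, `split_sentences`, `claims_to_verify`) are my own assumptions for illustration, not a validated method. It verifies nothing. It only builds the to-do list.

```python
import re

# Hypothetical patterns for claims that usually need a source check.
# Illustrative assumptions only, not an exhaustive or validated list.
CLAIM_PATTERNS = {
    "attributed source": re.compile(r"\baccording to\b", re.IGNORECASE),
    "dated study": re.compile(
        r"\b(?:19|20)\d{2}\b[^.?!]*\b(?:study|survey|report|paper)\b",
        re.IGNORECASE,
    ),
    "statistic": re.compile(r"\b\d+(?:\.\d+)?\s?(?:%|percent\b)", re.IGNORECASE),
}


def split_sentences(text):
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def claims_to_verify(draft):
    """Return (sentence, reasons) pairs for sentences a human should check."""
    flagged = []
    for sentence in split_sentences(draft):
        reasons = [
            name
            for name, pattern in CLAIM_PATTERNS.items()
            if pattern.search(sentence)
        ]
        if reasons:
            flagged.append((sentence, reasons))
    return flagged


if __name__ == "__main__":
    sample = (
        "According to a 2022 Harvard study, editors work faster. "
        "Editing is fun. About 40% of drafts need checks."
    )
    for sentence, reasons in claims_to_verify(sample):
        print(f"CHECK ({', '.join(reasons)}): {sentence}")
```

A sentence that slips past these patterns is not thereby verified, and a flagged sentence is not thereby wrong. The point is only that the checking step runs separately from the drafting step and ends with a person opening the actual source.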

2. Mark assumptions explicitly

When reviewing drafts, highlight statements that:

- Generalise broad trends

- Attribute intent

- Predict outcomes

Ask, “What is this based on?”

If there’s no source or reasoning, it’s either AI smoothing or human assumption. Either way, it needs grounding.

3. Create a bias checklist

Before publishing, review for:

- Overconfident claims

- Missing counterpoints

- Unexamined data sources

- Framing that benefits one side without disclosure

You’re not aiming for sterile neutrality. You’re aiming for informed positioning.

That requires awareness of where both AI bias in content and human bias can creep in.
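For teams that track reviews in a script rather than a document, the checklist above could be sketched roughly like this in Python. The four items come straight from the list. Everything around them (the `BiasReview` class, the rule that every item needs a written note) is a hypothetical structure I am assuming for illustration.

```python
from dataclasses import dataclass, field

# The four items are the checklist from the article; the surrounding
# structure is a hypothetical illustration, not a standard tool.
CHECKLIST_ITEMS = (
    "Overconfident claims",
    "Missing counterpoints",
    "Unexamined data sources",
    "Framing that benefits one side without disclosure",
)


@dataclass
class BiasReview:
    draft_title: str
    # Maps a checklist item to the reviewer's note on what they found.
    notes: dict = field(default_factory=dict)

    def record(self, item, note):
        """Store a reviewer's note for one checklist item."""
        if item not in CHECKLIST_ITEMS:
            raise ValueError(f"Unknown checklist item: {item!r}")
        if not note.strip():
            raise ValueError("A note is required, even if it is 'none found'.")
        self.notes[item] = note.strip()

    def outstanding(self):
        """Checklist items nobody has reviewed yet, in checklist order."""
        return [item for item in CHECKLIST_ITEMS if item not in self.notes]

    def ready_to_publish(self):
        """True only when every checklist item has a note."""
        return not self.outstanding()


if __name__ == "__main__":
    review = BiasReview("Example draft")
    review.record("Overconfident claims", "Softened two claims in the intro")
    print(review.outstanding())
```

Requiring a note instead of a tick box is a deliberate choice in this sketch: writing "none found" takes slightly more thought than clicking a checkbox, and that small friction is the point.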

4. Encourage disagreement

This might be the most controversial part. Good editorial culture welcomes internal pushback.

If everyone nods at a draft, that’s a red flag.

Invite someone to argue with it. Ask what feels overstated. Ask what’s underexplored.

Bias hides in comfort.

The uncomfortable truth for editors

AI hasn’t removed responsibility from editors. It’s increased it.

You’re no longer just refining prose. You’re mediating between algorithmic pattern recognition and human perspective.

You’re guarding against hallucinations and guarding against ideology.

That’s the double challenge.

And if I’m honest, it’s exhausting some days. But it’s also interesting.

Because it forces us to think more clearly about what we mean by truth, authority and judgement.

Final thought from your editor

AI is not the villain. Humans are not the solution. Bias is not new.

What’s new is scale and speed.

AI can generate plausible distortion faster than any human ever could. Humans can inject selective framing with narrative finesse.

Your job, if you care about credibility, is not to pick a side. It’s to build systems that assume both are flawed.

AI bias in content is real. Human bias is persistent. AI fact-checking challenges are non-trivial. AI editorial judgement is not optional.

You don’t solve this with better prompts alone.

You solve it with better thinking, stronger review processes and a healthy suspicion of anything that sounds a little too tidy.

Including this paragraph.

Yes, I see what I did there.